Steering NLP-generated output


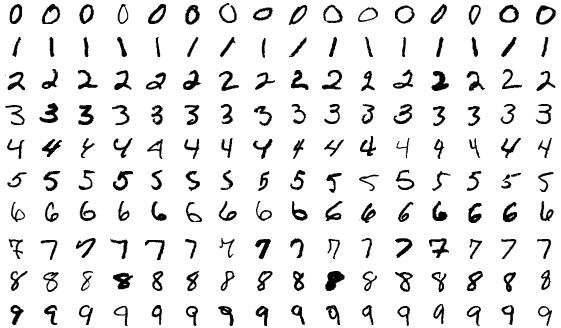

Astounding though the achievements of GPT-2 and BERT have been, these text prediction systems still produce output that is only superficially meaningful. They generate fluent sentences, but let the output run for a while and it becomes increasingly incoherent; staying on topic is hard. Data scientists have now developed a way to steer the output of GPT-2.

The system comprises two modules. The first is the original model, GPT-2, which predicts words based on statistical patterns of words occurring together. Its output is fed into a second statistical model, which evaluates that output on a predefined attribute: in this case, how well it stays on topic. The second model scores the output as each word is predicted, providing a corrective feedback loop for the first.
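To make the two-module idea concrete, here is a minimal sketch under loudly stated assumptions. It is not the researchers' method and does not use GPT-2: the "base model" is a hypothetical hard-coded table of next-word probabilities, the "attribute model" is a hypothetical keyword check for a finance topic, and steering is done by simple reranking (base log-probability plus `strength` times the attribute score) at each step. The published system may adjust the language model quite differently; this only illustrates a scorer nudging word choice as each word is predicted.

```python
import math

# Hypothetical toy "base model": next-word probabilities given only the previous word.
# A real system would use GPT-2's predicted distribution here.
BASE_MODEL = {
    "the": {"cat": 0.5, "market": 0.3, "stock": 0.2},
    "cat": {"sat": 0.6, "traded": 0.4},
    "market": {"rallied": 0.7, "sat": 0.3},
    "stock": {"rallied": 0.5, "sat": 0.5},
    "sat": {"<end>": 1.0},
    "traded": {"<end>": 1.0},
    "rallied": {"<end>": 1.0},
}

# Hypothetical attribute model: 1.0 if a word is on-topic (finance), else 0.0.
TOPIC_WORDS = {"market", "stock", "traded", "rallied"}


def attribute_score(word: str) -> float:
    return 1.0 if word in TOPIC_WORDS else 0.0


def steered_generate(start: str, strength: float, max_len: int = 10) -> str:
    """Greedy decoding where the attribute score is added to each candidate's log-probability."""
    words = [start]
    for _ in range(max_len):
        # Unknown previous words simply end the sequence in this toy model.
        candidates = BASE_MODEL.get(words[-1], {"<end>": 1.0})
        # Corrective step: rerank base-model candidates using the attribute model.
        best = max(
            candidates,
            key=lambda w: math.log(candidates[w]) + strength * attribute_score(w),
        )
        if best == "<end>":
            break
        words.append(best)
    return " ".join(words)


if __name__ == "__main__":
    print(steered_generate("the", strength=0.0))  # unsteered: "the cat sat"
    print(steered_generate("the", strength=2.0))  # steered:   "the market rallied"
```

With `strength=0.0` the base model's most likely path wins; raising `strength` lets the topic scorer pull generation toward finance words without changing the base model's table at all, which mirrors the article's point about avoiding retraining.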

This two-model setup can combine multiple goals and provides much-needed flexibility, opening up new applications. In translation, for instance, the second model could be trained on language-specific rules. The technique shows how we can avoid expensive retraining on topic- or context-specific datasets while still gaining more control over the output of pre-trained models such as GPT-2.

Read the full article at MIT Technology Review.

Image Source: Shutterstock