Deep learning models to find causality?

A Holy Grail of machine learning is a model that can infer causality rather than merely find correlations in data. It isn’t a big focus area among researchers yet, but it is a fascinating challenge.

A researcher at Facebook, Leon Bottou, presented an interesting framework that shows a path forward. His idea is elegant: if we can find a way to get rid of all the “spurious” correlations in a model, then what we are left with are “invariant” relationships. If those hold true in different conditions, isn’t that a description of causality?

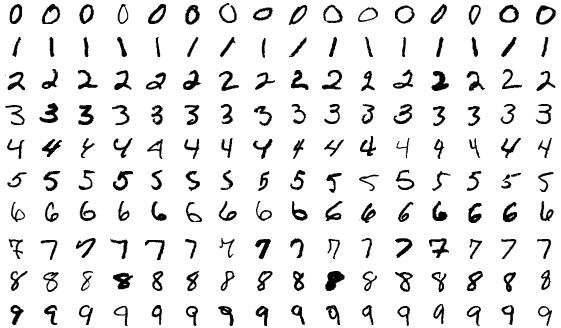

He and his team applied this approach to the classic computer vision task of recognizing handwritten digits. They first added the color of the digits as an extra feature, and the model learnt to rely not only on the shape of the digits but also, “spuriously”, on their color. As a result, it made poor predictions on data where color no longer tracked the digit.
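To make the failure concrete, here is a minimal toy sketch in Python with NumPy. It is not the team's actual experiment: `make_env`, the binary `shape` and `color` features, and the agreement probabilities (0.75, 0.9, 0.1) are all assumptions made up for illustration. The "model" simply picks whichever single feature predicts the label best on the training data, which is enough to show how a spurious feature can win during training and then fail.

```python
import numpy as np

def make_env(n, color_agree, rng, shape_agree=0.75):
    # Hypothetical toy environment (not real MNIST): a binary label y and two
    # binary features. "shape" matches y with a fixed probability, while
    # "color" matches y with a probability that depends on the environment.
    y = rng.integers(0, 2, size=n)
    shape = np.where(rng.random(n) < shape_agree, y, 1 - y)
    color = np.where(rng.random(n) < color_agree, y, 1 - y)
    return {"shape": shape, "color": color}, y

def accuracy(pred, y):
    # Fraction of examples where the feature value equals the label.
    return float(np.mean(pred == y))

rng = np.random.default_rng(0)
# In training, color happens to agree with the label most of the time.
train_x, train_y = make_env(20_000, color_agree=0.9, rng=rng)
# At test time, that relationship is flipped.
test_x, test_y = make_env(20_000, color_agree=0.1, rng=rng)

# "Training": keep the single feature with the best training accuracy.
train_acc = {name: accuracy(f, train_y) for name, f in train_x.items()}
chosen = max(train_acc, key=train_acc.get)
test_acc = {name: accuracy(f, test_y) for name, f in test_x.items()}

print("chosen feature:", chosen)
print("train accuracy:", train_acc)
print("test accuracy:", test_acc)
```

In this toy setup the color feature looks best during training, so it gets chosen, and its accuracy collapses once the color relationship changes. The shape feature is less impressive in training but holds steady.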

Then the team trained on two separate datasets, each with a different color set. This time the result was neat: the model learnt to disregard color as a predictor, and prediction accuracy became healthy again. Isn’t shape then the “invariant” feature? It’s an interesting path to pursue. For more details on what Bottou and his team did, read here.
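The "keep only what stays the same across datasets" idea can be sketched with a very simple check. This is not the training method Bottou's team used. It is a cruder stand-in that compares how often each feature agrees with the label in two training environments and keeps the features whose agreement rate barely moves. The environments, the `make_env` helper, the agreement probabilities, and the `TOLERANCE` threshold are all hypothetical.

```python
import numpy as np

def make_env(n, color_agree, rng, shape_agree=0.75):
    # Hypothetical toy environment (not real MNIST): "shape" agrees with the
    # label y at the same rate everywhere, "color" at an environment-specific rate.
    y = rng.integers(0, 2, size=n)
    shape = np.where(rng.random(n) < shape_agree, y, 1 - y)
    color = np.where(rng.random(n) < color_agree, y, 1 - y)
    return {"shape": shape, "color": color}, y

def agreement(feature, y):
    # How often the feature value equals the label in one environment.
    return float(np.mean(feature == y))

rng = np.random.default_rng(0)
# Two training environments that differ only in how strongly color tracks the label.
envs = [
    make_env(20_000, color_agree=0.9, rng=rng),
    make_env(20_000, color_agree=0.8, rng=rng),
]

TOLERANCE = 0.05  # assumed threshold for "the relationship did not change"
gaps = {}
for name in ("shape", "color"):
    rates = [agreement(x[name], y) for x, y in envs]
    gaps[name] = max(rates) - min(rates)

# Keep only features whose relationship with the label is stable across environments.
invariant = [name for name, gap in gaps.items() if gap < TOLERANCE]

print("agreement gap per feature:", gaps)
print("invariant features:", invariant)
```

Color is still the stronger predictor inside each environment here, yet it is the one that gets discarded, because its relationship with the label shifts between environments while the shape relationship does not.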

Image Source: MNIST sample image, Wikipedia