Overfitting: a new perspective

We have a used car price prediction model whose RMSE seems to have hit a limit over the past 3 months. Different algorithms, intensive hyperparameter tuning, and intuitive feature engineering have all yielded nothing more than minor improvements. Then we realized that we have been retraining every month on updated data sets of new used car listings. What if we are torturing our model to seek structure in what is perhaps random structure? After all, the used car market sees a lot of dynamic price fluctuations, driven by a host of macro and market factors.

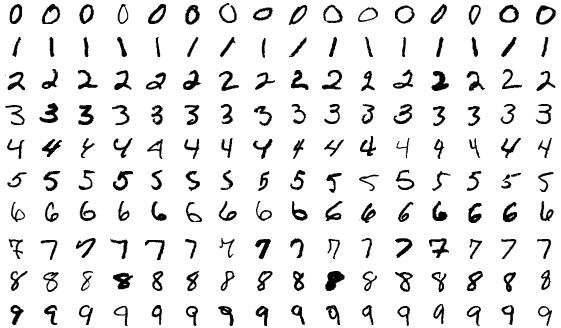

In this blog article, Alex Hayes makes an interesting point about modeling, which is essentially an attempt to find structure in data. The structure we see can originate from two sources: what he calls systematic structure, which is inherent in the data generating process, and apparent structure, which arises from the randomness of that process.
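To make the two kinds of structure concrete, here is a minimal sketch on toy data (not our car listings). Everything in it is an assumption for illustration: the linear signal, the noise level `NOISE_SD`, and the helper names `make_data` and `rmse`. The flexible model nests the simple one, so its training RMSE can only go down, but on fresh data we should not expect the RMSE to drop much below the noise level, since the noise carries no systematic structure to learn.

```python
import numpy as np
from numpy.polynomial import Polynomial

rng = np.random.default_rng(0)
NOISE_SD = 1.0  # assumed irreducible noise level of the toy process


def make_data(n):
    # Systematic structure: y depends linearly on x.
    # Everything else is randomness from the data generating process.
    x = rng.uniform(-1.0, 1.0, size=n)
    y = 3.0 * x + rng.normal(0.0, NOISE_SD, size=n)
    return x, y


def rmse(y_true, y_pred):
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))


x_train, y_train = make_data(200)
x_test, y_test = make_data(10_000)

results = {}
for degree in (1, 12):
    # Least-squares polynomial fit; degree 12 has plenty of room
    # to chase apparent structure in the training noise.
    model = Polynomial.fit(x_train, y_train, deg=degree, domain=[-1.0, 1.0])
    results[degree] = (
        rmse(y_train, model(x_train)),  # training RMSE
        rmse(y_test, model(x_test)),    # held-out RMSE
    )
    print(f"degree {degree:2d}: train RMSE = {results[degree][0]:.3f}, "
          f"test RMSE = {results[degree][1]:.3f}")
```

If the extra flexibility only lowers the training RMSE while the held-out RMSE stays near `NOISE_SD`, the additional "structure" the model found was apparent rather than systematic.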

The key point he makes is that humans have poor intuition for telling the two apart (see how you fare in the coin flip tests in his article). When we see structure, we assume it is non-random, and therefore useful to study and leverage. This is what ML parlance calls overfitting, and it is a reminder that structure in data can also be random. If model performance starts plateauing, that isn’t necessarily a bad thing: the model may already have captured the systematic structure that is there.
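A quick way to check our own intuition is to simulate fair coin flips and look at the streaks. The sketch below is our own illustration, not the test from the article; the helper `longest_run` and the choice of 100 flips and 2,000 repetitions are assumptions. It measures how long the longest streak of identical outcomes typically is in a purely random sequence.

```python
import numpy as np


def longest_run(flips):
    """Length of the longest streak of identical consecutive outcomes."""
    if len(flips) == 0:
        return 0
    best = current = 1
    for prev, nxt in zip(flips[:-1], flips[1:]):
        # Extend the streak if the outcome repeats, otherwise start over.
        current = current + 1 if nxt == prev else 1
        best = max(best, current)
    return best


rng = np.random.default_rng(42)

# 2,000 independent sequences of 100 fair coin flips (0 = tails, 1 = heads).
runs = np.array([longest_run(rng.integers(0, 2, size=100)) for _ in range(2000)])

mean_longest_run = float(runs.mean())
share_with_run_of_5 = float((runs >= 5).mean())

print(f"average longest streak in 100 flips: {mean_longest_run:.2f}")
print(f"share of sequences with a streak of 5 or more: {share_with_run_of_5:.1%}")
```

Streaks of five or more identical outcomes look like a pattern, yet they turn up in most fair sequences of this length. A model flexible enough to "explain" such streaks is fitting apparent structure.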

Image Source: Shutterstock