Ernie: New type of masking to understand language meaning

Baidu recently beat Google and Microsoft in an ongoing competition, the General Language Understanding Evaluation (GLUE). In the process, its model also beat the average person's comprehension score on GLUE, scoring 90 points against a human score of 87.

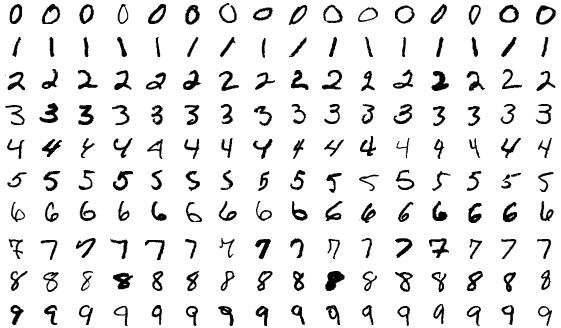

It did this by taking a different approach to masking from the one BERT used. In masking, some words in a sentence are hidden, or masked, and the model learns by predicting each masked word from the context that comes before and after it. Learning from context on both sides is the big deal that BERT accomplished.
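To make the idea concrete, here is a minimal sketch of BERT-style word masking, assuming a sentence that is already split into words. It is simplified: real BERT pre-training also replaces some selected tokens with random words or leaves them unchanged, which this sketch skips, and the function name `mask_tokens` is hypothetical.

```python
import random

MASK = "[MASK]"

def mask_tokens(tokens, mask_prob=0.15, seed=None):
    """Hide a random subset of individual tokens (simplified BERT-style masking).

    Returns the masked sequence and a dict mapping each masked position
    to the original token the model would be trained to predict.
    """
    rng = random.Random(seed)
    masked = list(tokens)
    targets = {}
    for i, tok in enumerate(tokens):
        # Each token is chosen independently of its neighbours.
        if rng.random() < mask_prob:
            masked[i] = MASK
            targets[i] = tok
    return masked, targets

sentence = "the cat sat on the mat because it was tired".split()
masked, targets = mask_tokens(sentence, mask_prob=0.3, seed=0)
print(" ".join(masked))
print(targets)
```

Because each word is masked on its own, the model can often fill in one word of a phrase just from the other words of that same phrase.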

Baidu had a different challenge. In Chinese, a word is made up of several characters, and most characters carry little meaning on their own until a specific combination of them comes together. So Ernie was trained to learn context, before and after, by masking whole strings of characters rather than single ones. When it did, the Ernie team found that the model performed better in English too, which also has phrases whose words only work together (think of "never say die"). This suggests Ernie learns something closer to the "meaning" rather than just deciphering statistical patterns in word usage.
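The difference can be sketched by masking whole units instead of single tokens. This is an illustrative simplification, not Baidu's code: it assumes the sentence has already been segmented into phrases (the segmentation below is hand-written and hypothetical), and `mask_spans` is a hypothetical helper name.

```python
import random

MASK = "[MASK]"

def mask_spans(spans, mask_prob=0.15, seed=None):
    """Mask whole spans (phrases or multi-character words) at once.

    `spans` is a list of token lists, i.e. a sentence already split into
    units. If a span is chosen, every token in it is masked together, so
    the model must recover the full unit from the surrounding context.
    """
    rng = random.Random(seed)
    masked = []
    targets = []  # (start_index, original_span) pairs
    for span in spans:
        if rng.random() < mask_prob:
            targets.append((len(masked), list(span)))
            masked.extend([MASK] * len(span))
        else:
            masked.extend(span)
    return masked, targets

# Hypothetical phrase segmentation; a real system would need a segmenter.
spans = [["he"], ["would"], ["never", "say", "die"], ["in", "a", "crisis"]]
masked, targets = mask_spans(spans, mask_prob=0.5, seed=3)
print(" ".join(masked))
print(targets)
```

When "never say die" is selected, all three words disappear together, so the model cannot lean on "never" and "say" to guess "die"; it has to infer the whole phrase from the rest of the sentence.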

That also opens up new possibilities in translation. Read the full article on MIT Technology Review.

Image Source: Shutterstock